AWS Cost Optimization Best Practices to Reduce Cloud Spend

Switching to the AWS cloud can lead to significant cost reduction. However, it is easy to overspend without proper cloud cost optimization. Unnoticed expenses, such as unused instances, obsolete snapshots, and unattached volumes, can inflate your AWS bill. This post explores AWS cost optimization strategies that save money and make your infrastructure leaner and more efficient, improving performance and user experience.

Using the cloud efficiently is no longer just about keeping infrastructure running – it is about making sure every dollar spent supports actual business needs. As AWS environments grow, costs often become harder to track and easier to waste through oversized resources, idle services, poor pricing choices, or architecture decisions that no longer match workload demands.

AWS cost optimization is the process of bringing spending, usage, and infrastructure design into alignment. Done well, it helps businesses reduce unnecessary costs, improve resource efficiency, and make better operational decisions. In this article, we break down the AWS cost optimization best practices that help control cloud spend, improve cost visibility, and build a more efficient AWS environment.

Why Cloud Cost Reduction is Important

Cloud cost reduction is not only about lowering your AWS bill. It is about making sure your infrastructure stays efficient, scalable, and aligned with actual business needs. As AWS environments grow, it becomes easier to lose visibility into spending and harder to control waste. A structured cost optimization approach helps maintain financial control while improving operational efficiency.

Improve Financial Efficiency

As your infrastructure expands, small unnecessary costs can quietly accumulate into a significant monthly expense. Unused instances, obsolete snapshots, unattached volumes, and idle services often go unnoticed but continue generating charges. Identifying and removing these inefficiencies helps reduce unnecessary spend and improves budget predictability.

Optimize Resource Utilization

Cost optimization often goes hand in hand with better resource management. Rightsizing instances, matching capacity to demand, and selecting the right storage and pricing models help eliminate waste without affecting performance. A leaner infrastructure is easier to manage and often delivers better application performance and user experience.

Strengthen Operational Visibility

Reviewing AWS costs in detail gives better insight into how workloads consume resources. It helps teams understand where money is being spent, which services drive the highest costs, and where inefficiencies exist. This visibility supports better architectural and operational decisions over time.

Enable Proactive Infrastructure Management

The process of optimizing cloud costs encourages a more proactive approach to infrastructure management. Instead of reacting to rising bills, teams can monitor usage trends, detect anomalies early, and automate controls to prevent waste before it grows. This leads to more predictable cloud spending and stronger long-term cost control.

AWS Cost Optimization Best Practices

Our specialists have hand-picked the following AWS cost-saving strategies to help you reduce your monthly bill:

Choose the Right Pricing Models

Pricing model selection is one of the fastest ways to reduce AWS spend without changing architecture. Before rightsizing or re-architecting, try to cut costs simply by aligning your workloads with the right purchasing model.

Reserved Instances for Predictable Usage

EC2 Reserved Instances (RIs) offer discounted pricing in exchange for committing to predictable compute usage over a one-year or three-year term. With RIs, you can save up to 72% compared to On-Demand prices. RIs come in two types: Standard and Convertible. The main difference is that Convertible RIs can be exchanged for RIs with different attributes, such as a newer instance family, though they come with a smaller discount.

There are three payment options:

- All Upfront

- Partial Upfront

- No Upfront

With the Partial Upfront or No Upfront option, the remaining balance is billed in monthly installments over the term, which is rather convenient.
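To see when a reservation pays off, it helps to run the numbers. The following is a minimal sketch with hypothetical prices (not real AWS rates); the function names are our own. It compares one year of On-Demand usage with a Partial Upfront reservation and computes the share of the year an instance must run before the reservation becomes cheaper.

```python
HOURS_PER_YEAR = 8760


def reserved_vs_on_demand(on_demand_hourly, upfront, reserved_hourly, utilization):
    """Return (on_demand_cost, reserved_cost) for a one-year term.

    utilization is the fraction of the year (0.0 to 1.0) the instance runs.
    On-Demand is billed only for running hours; the reservation is billed
    for every hour of the term whether the instance runs or not.
    """
    on_demand_cost = on_demand_hourly * HOURS_PER_YEAR * utilization
    reserved_cost = upfront + reserved_hourly * HOURS_PER_YEAR
    return on_demand_cost, reserved_cost


def break_even_utilization(on_demand_hourly, upfront, reserved_hourly):
    """Fraction of the year above which the reservation is cheaper."""
    reserved_cost = upfront + reserved_hourly * HOURS_PER_YEAR
    return reserved_cost / (on_demand_hourly * HOURS_PER_YEAR)


# Hypothetical prices: $0.10/h On-Demand, $200 upfront plus $0.04/h reserved.
print(round(break_even_utilization(0.10, 200.0, 0.04), 2))
```

With these made-up numbers the break-even point is roughly 63% of the year, so a workload that runs only during office hours would be cheaper on On-Demand pricing.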

Note that reservations are not limited to Amazon EC2 instances. You can also reserve capacity for Amazon RDS, Amazon ElastiCache, Amazon Redshift, Amazon DynamoDB, and other services.

Utilizing Spot Instances for Flexible Workloads

Amazon EC2 Spot Instances provide access to unused EC2 compute capacity at discounts of up to 90% compared to On-Demand pricing. They are a strong fit for flexible, fault-tolerant workloads that can handle interruptions, such as batch processing, data analysis, CI/CD jobs, or other non-time-sensitive tasks.

The tradeoff is that AWS can reclaim Spot capacity at any time, providing only a two-minute interruption notice. To use Spot Instances effectively, workloads should be designed to tolerate disruption or resume automatically. With proper monitoring, automation, and tools such as AWS Spot Fleet, businesses can manage interruptions and maintain the required capacity while significantly reducing infrastructure costs.
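A workload can react to the two-minute notice by reading the `spot/instance-action` document from the instance metadata service. The sketch below covers only the parsing step and assumes the document body has already been fetched (the HTTP call and IMDSv2 token handling are omitted); the function name is our own.

```python
import json
from datetime import datetime, timezone


def seconds_until_interruption(instance_action_json, now):
    """Return (action, seconds_left) from a spot/instance-action document.

    instance_action_json: JSON body such as
        {"action": "terminate", "time": "2017-09-18T08:22:00Z"}
    now: timezone-aware datetime used as the reference time.
    """
    doc = json.loads(instance_action_json)
    deadline = datetime.strptime(doc["time"], "%Y-%m-%dT%H:%M:%SZ")
    deadline = deadline.replace(tzinfo=timezone.utc)
    return doc["action"], (deadline - now).total_seconds()


sample = '{"action": "terminate", "time": "2017-09-18T08:22:00Z"}'
reference = datetime(2017, 9, 18, 8, 20, 0, tzinfo=timezone.utc)
action, remaining = seconds_until_interruption(sample, reference)
print(action, remaining)  # terminate 120.0
```

A worker could poll for this document every few seconds and, once it appears, checkpoint its progress and stop accepting new jobs before the deadline.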

Upgrade Instances to the Latest Generation

Another effective strategy to reduce AWS costs is to upgrade your instances to the latest generation. AWS regularly releases new generations of instances that offer better performance, functionality, and cost-effectiveness compared to their predecessors. By upgrading, you can enjoy these benefits while potentially reducing your costs.

The latest generation instances often provide improved performance at the same or even lower price. This means you can maintain or enhance your application’s performance while reducing your AWS bill. Additionally, newer instances often come with features that are not available in older generations, such as enhanced networking capabilities, better CPU performance, and increased instance storage.

To upgrade instances to the latest generation:

- Open the AWS Management Console and navigate to the EC2 dashboard.

- In the navigation pane, choose “Instances”.

- Identify any instances that are not the latest generation. You can do this by checking the instance type, which indicates the generation of the instance.

- Stop the instance, change the instance type to the latest generation, and then start the instance again. Please note that stopping and starting an instance will change the public IP address of the instance unless you have an Elastic IP address associated with it.
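The same stop, change, and start sequence can be scripted. This is a minimal sketch that assumes `ec2` is a boto3 EC2 client (for example `boto3.client("ec2")`) and that the instance is EBS-backed; the function name and the example instance type are our own choices. Not every type change is compatible (architecture and driver requirements differ), so test on a non-production instance first.

```python
def change_instance_type(ec2, instance_id, new_type):
    """Stop an instance, change its type, then start it again.

    ec2 is expected to behave like a boto3 EC2 client.
    """
    # The instance type can only be modified while the instance is stopped.
    ec2.stop_instances(InstanceIds=[instance_id])
    ec2.get_waiter("instance_stopped").wait(InstanceIds=[instance_id])

    ec2.modify_instance_attribute(
        InstanceId=instance_id,
        InstanceType={"Value": new_type},
    )

    ec2.start_instances(InstanceIds=[instance_id])
    ec2.get_waiter("instance_running").wait(InstanceIds=[instance_id])


# Example call (requires AWS credentials and a real instance ID):
# import boto3
# change_instance_type(boto3.client("ec2"), "i-0123456789abcdef0", "m6i.large")
```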

Match Capacity with Demand

Analyze your instances and traffic thoroughly so that you know your actual capacity needs and do not overspend on reserved capacity you may never use. To better understand your infrastructure usage and align capacity with actual demand, use the following AWS tools and features:

- AWS Cost Explorer Resource Optimization identifies underutilized or idle resources and highlights rightsizing opportunities to reduce waste.

- AWS Instance Scheduler automatically starts and stops instances based on schedules, helping avoid paying for resources outside working hours.

- AWS Systems Manager OpsCenter helps detect, investigate, and automate responses to operational issues affecting resource efficiency.

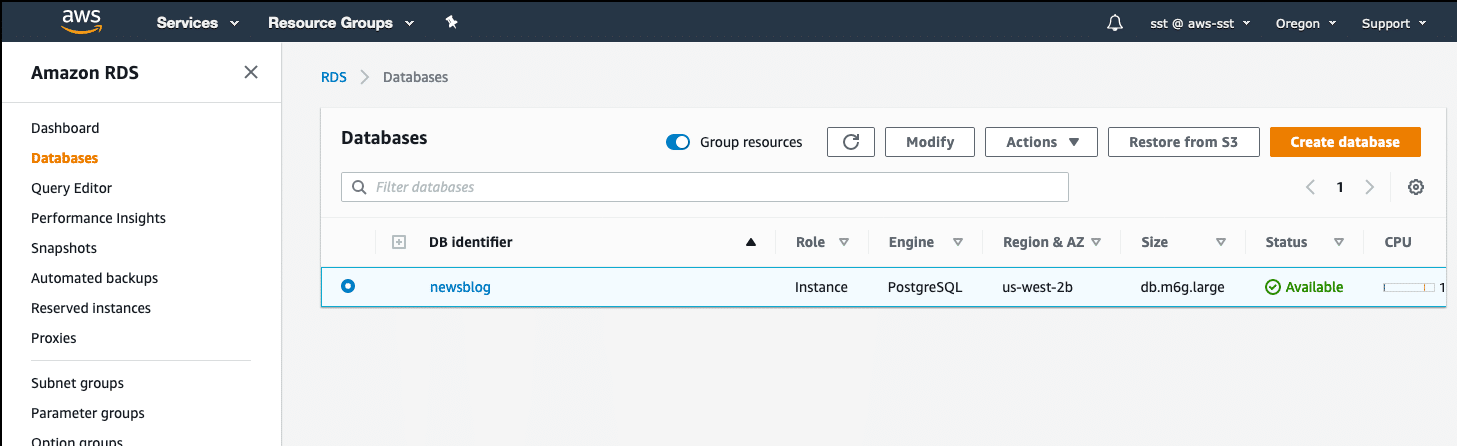

- Amazon RDS Idle DB instance checks find database instances with low or no activity so they can be resized, paused, or removed.

- Amazon EC2 Auto Scaling automatically scales capacity up or down based on traffic or performance metrics, so you only pay for what you need.
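As an illustration of the Auto Scaling item above, the sketch below builds the arguments for a target tracking policy that keeps average CPU utilization of a group near a chosen value. The group name, policy name, and the 50% target are hypothetical; the dictionary is shaped for the `put_scaling_policy` call of a boto3 Auto Scaling client.

```python
def target_tracking_policy(group_name, target_cpu_percent):
    """Build keyword arguments for autoscaling.put_scaling_policy."""
    return {
        "AutoScalingGroupName": group_name,
        "PolicyName": f"{group_name}-cpu-target",  # naming scheme is our own
        "PolicyType": "TargetTrackingScaling",
        "TargetTrackingConfiguration": {
            "PredefinedMetricSpecification": {
                "PredefinedMetricType": "ASGAverageCPUUtilization"
            },
            "TargetValue": float(target_cpu_percent),
        },
    }


params = target_tracking_policy("web-asg", 50)  # "web-asg" is a hypothetical group

# With credentials configured, the policy could be applied like this:
# import boto3
# boto3.client("autoscaling").put_scaling_policy(**params)
```

A lower target leaves more headroom for traffic spikes at a higher cost, while a higher target runs fewer instances closer to their limits.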

Use the Right Storage Options

Allocating your data to different storage options is a great way to save money. In general, the less frequently your data is accessed, the less it costs to store. AWS offers a range of storage options, and the current S3 storage tiers are as follows:

- S3 Standard – for general-purpose storage of frequently accessed data

- S3 Intelligent-Tiering – for data with unknown or changing access patterns

- S3 Standard-Infrequent Access and S3 One Zone-Infrequent Access – for long-lived, but less frequently accessed data

- Amazon S3 Glacier Instant Retrieval, Amazon S3 Glacier Flexible Retrieval and Amazon S3 Glacier Deep Archive – for long-term archive and digital preservation.

Note that Amazon S3 also offers capabilities to manage your data throughout its lifecycle. S3 Lifecycle policies can move objects to lower-cost storage tiers or expire them on a schedule you define. Review your existing data and move it to different storage tiers according to its access frequency to further optimize costs.
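A lifecycle policy is expressed as a set of rules. The sketch below builds one rule that moves objects under a prefix to S3 Standard-IA, then to S3 Glacier Flexible Retrieval, and finally expires them. The rule ID, prefix, and day counts are hypothetical, and the ordering check is a sanity check of this sketch rather than a complete validation of S3's rules.

```python
def lifecycle_configuration(rule_id, prefix, ia_after_days, glacier_after_days,
                            expire_after_days):
    """Build a LifecycleConfiguration dict for put_bucket_lifecycle_configuration."""
    # S3 requires objects to be at least 30 days old before moving to Standard-IA.
    if ia_after_days < 30:
        raise ValueError("Standard-IA transition needs at least 30 days")
    if not ia_after_days < glacier_after_days < expire_after_days:
        raise ValueError("day counts must increase from tier to tier")
    return {
        "Rules": [{
            "ID": rule_id,
            "Status": "Enabled",
            "Filter": {"Prefix": prefix},
            "Transitions": [
                {"Days": ia_after_days, "StorageClass": "STANDARD_IA"},
                {"Days": glacier_after_days, "StorageClass": "GLACIER"},
            ],
            "Expiration": {"Days": expire_after_days},
        }]
    }


config = lifecycle_configuration("archive-logs", "logs/", 30, 90, 365)

# With credentials configured (bucket name is hypothetical):
# import boto3
# boto3.client("s3").put_bucket_lifecycle_configuration(
#     Bucket="example-bucket", LifecycleConfiguration=config)
```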

Terminate Any Unused Assets

It is easy to overlook unused or underutilized assets. AWS Trusted Advisor can help you find such resources so that you can remove anything that has not been used in a while, or move it to a more appropriate tier.

Some of those so-called “zombie assets” can be:

- unattached EBS volumes

- obsolete snapshots

- unused Elastic Load Balancers

- unattached Elastic IP addresses

- instances with no network activity over the last week
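Some of these assets are easy to list programmatically. As one example, the sketch below collects EBS volumes in the `available` state, which means they are not attached to any instance. It assumes `ec2` is a boto3 EC2 client; the function name is our own.

```python
def unattached_volumes(ec2):
    """Return (volume_id, size_gib) pairs for EBS volumes not attached to anything.

    ec2 is expected to behave like a boto3 EC2 client.
    """
    found = []
    paginator = ec2.get_paginator("describe_volumes")
    # "available" is the state of a volume that exists but is not attached.
    pages = paginator.paginate(
        Filters=[{"Name": "status", "Values": ["available"]}]
    )
    for page in pages:
        for volume in page["Volumes"]:
            found.append((volume["VolumeId"], volume["Size"]))
    return found


# Example call (requires AWS credentials):
# import boto3
# for volume_id, size in unattached_volumes(boto3.client("ec2")):
#     print(volume_id, size, "GiB")
```

Review the result before deleting anything, since a detached volume may still hold data someone needs.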

Releasing Unattached Elastic IP Addresses

An Elastic IP address is a static, public IPv4 address designed for dynamic cloud computing. Since February 1, 2024, AWS charges an hourly fee for every public IPv4 address, including Elastic IP addresses, whether or not it is associated with a running instance. This means that unattached Elastic IP addresses generate charges while delivering no value, and they can quickly add to your AWS costs.

To avoid these unnecessary costs, it’s important to regularly review your Elastic IP usage and release any addresses that are not associated with a running instance. This can be done manually through the AWS Management Console, or you can automate the process using AWS scripts or third-party tools. By managing your Elastic IP usage effectively, you can ensure that you are only paying for the resources that you are actually using.
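One way to automate this review is sketched below. It assumes `ec2` is a boto3 EC2 client and treats an address as unused when it has no `AssociationId`; the function names and the `dry_run` switch are our own. Note that this checks association only, so an address attached to a stopped instance is not reported.

```python
def unassociated_addresses(ec2):
    """Return Elastic IP records that are not associated with any resource."""
    response = ec2.describe_addresses()
    return [a for a in response["Addresses"] if "AssociationId" not in a]


def release_addresses(ec2, addresses, dry_run=True):
    """Release the given addresses and return their public IPs.

    With dry_run=True (the default) nothing is released; the function only
    reports which addresses would be affected.
    """
    targets = []
    for address in addresses:
        if not dry_run:
            ec2.release_address(AllocationId=address["AllocationId"])
        targets.append(address["PublicIp"])
    return targets


# Example call (requires AWS credentials):
# import boto3
# client = boto3.client("ec2")
# print(release_addresses(client, unassociated_addresses(client)))
```

Keeping the dry run as the default makes it harder to release an address that an external system, such as a DNS record or firewall allow-list, still depends on.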

Implement Processes to Identify Resource Waste

AWS provides an assortment of tools to automate your processes. Automation will not only help reduce costs but will also take a great deal of work off your cloud governance team. Below are the best practices and services that help you identify resource waste so that you can remove it:

- Use AWS Trusted Advisor checks to identify underutilized Amazon EBS volumes, idle load balancers, unused Elastic IP addresses, and low-utilization EC2 instances

- Snapshot underutilized volumes if needed, then delete them to stop unnecessary storage charges

- Automate snapshot creation, retention, and deletion using Amazon Data Lifecycle Manager

- Use AWS Compute Optimizer to get rightsizing recommendations for EC2 instances, EBS volumes, Lambda functions, and some database workloads

- Use AWS Cost Explorer Resource Optimization and cost trends to detect idle or oversized resources and identify spending anomalies

- Use AWS Cost Anomaly Detection to automatically detect unusual cost spikes or unexpected usage patterns

- Use Amazon CloudWatch metrics and alarms to identify low-utilization instances and trigger automated remediation workflows

- Use Amazon S3 Analytics and Amazon S3 Storage Lens to analyze storage access patterns and identify inefficient storage usage

- Automate moving objects into lower-cost storage tiers using S3 Lifecycle Policies

- Use Amazon S3 Intelligent-Tiering to automatically move data to the most cost-effective storage class

- Use AWS Instance Scheduler to automatically stop non-production instances outside working hours

- Use AWS Cost Optimization Hub to view and prioritize cost-saving recommendations across AWS accounts and services.
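Several of the checks above come down to comparing utilization metrics against thresholds. The sketch below shows that idea on a list of CPU utilization samples, such as datapoints retrieved from Amazon CloudWatch. The thresholds (1% and 40% of peak CPU) and the labels are assumptions for illustration, not values taken from any AWS tool's configuration.

```python
def classify_utilization(cpu_percent_samples, idle_below=1.0,
                         underutilized_below=40.0):
    """Label an instance based on its peak CPU utilization.

    cpu_percent_samples: CPU utilization values in percent over the review
    period. Threshold defaults are illustrative assumptions.
    """
    if not cpu_percent_samples:
        return "no-data"
    peak = max(cpu_percent_samples)
    if peak <= idle_below:
        return "idle"            # candidate for termination
    if peak <= underutilized_below:
        return "underutilized"   # candidate for a smaller instance type
    return "ok"


print(classify_utilization([0.2, 0.4, 0.3]))     # idle
print(classify_utilization([5.0, 22.5, 12.0]))   # underutilized
print(classify_utilization([35.0, 78.0, 64.0]))  # ok
```

Using the peak rather than the average avoids downsizing an instance that is quiet most of the time but needs its full capacity during bursts.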

Schedule On/Off Times

Schedule on/off times for non-production instances (development, testing, etc.). Leaving such instances on only during working hours, Monday to Friday, yields substantial savings by itself. Consider going further and turning certain instances off even during lunchtime. Careful scheduling saves even more for projects with irregular development hours.
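The potential saving is easy to estimate. The sketch below assumes office hours of 08:00 to 18:00 on weekdays (a hypothetical schedule) and shows both the check a scheduler would make and the share of weekly instance hours avoided.

```python
from datetime import datetime

WORK_START_HOUR = 8   # assumed start of the working day
WORK_END_HOUR = 18    # assumed end of the working day


def should_be_running(moment):
    """Return True if a non-production instance should be on at this time."""
    is_weekday = moment.weekday() < 5  # Monday is 0, Sunday is 6
    return is_weekday and WORK_START_HOUR <= moment.hour < WORK_END_HOUR


def weekly_savings_fraction():
    """Fraction of weekly instance hours avoided by following the schedule."""
    running_hours = 5 * (WORK_END_HOUR - WORK_START_HOUR)
    return 1 - running_hours / (7 * 24)


print(should_be_running(datetime(2024, 1, 1, 9, 0)))  # a Monday morning: True
print(round(weekly_savings_fraction(), 2))            # 0.7
```

Under this schedule the instance runs 50 of 168 hours per week, so roughly 70% of its hourly compute charges are avoided. Attached storage such as EBS volumes is still billed while the instance is stopped.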

Use Consolidated Billing

Consolidated billing is a feature of AWS Organizations that combines charges from all member accounts into a single bill paid by the management account (formerly called the master account). The feature is free to use and has a number of benefits. For instance, usage is combined across all accounts, which can help you qualify for volume pricing tiers. Seeing the combined cost and usage data from all accounts also makes spending easier to track.
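The effect of combining usage can be shown with a small tiered-pricing calculation. The tiers and prices below are hypothetical and chosen only to illustrate the mechanism: two accounts that each stay in the first tier pay more in total than one bill that aggregates their usage and reaches the cheaper second tier.

```python
def tiered_cost(usage_gb, tiers):
    """Compute cost under tiered pricing.

    tiers: list of (tier_size_gb, price_per_gb); a size of None means the
    tier has no upper limit.
    """
    cost = 0.0
    remaining = usage_gb
    for size, price in tiers:
        portion = remaining if size is None else min(remaining, size)
        cost += portion * price
        remaining -= portion
        if remaining <= 0:
            break
    return cost


# Hypothetical monthly storage tiers (GB, USD per GB).
TIERS = [(50_000, 0.023), (450_000, 0.022), (None, 0.021)]

separate = tiered_cost(40_000, TIERS) + tiered_cost(40_000, TIERS)
combined = tiered_cost(80_000, TIERS)
print(round(separate, 2), round(combined, 2))  # 1840.0 1810.0
```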

Upgrade Architecture

Optimizing your architecture is a critical part of reducing AWS costs. A solution has several dimensions to consider, such as availability, performance, and resilience, and cost deserves the same deliberate attention when you design AWS workloads. Here are some strategies to help you upgrade your architecture and reduce AWS costs.

Migrating AWS Lambda Functions to Arm-based AWS Graviton2 Processors

AWS Graviton processors, which are based on the Arm processor architecture, are custom silicon designed by Amazon’s Annapurna Labs. They are optimized for performance and cost, allowing customers to achieve up to 34% better price performance. Many serverless workloads can benefit from Graviton2, especially those not reliant on libraries requiring an x86 architecture. By migrating your AWS Lambda functions to Graviton2 processors, you can significantly reduce AWS costs.
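A rough estimate of the compute portion of a Lambda bill shows where the saving comes from. The per-GB-second prices below are assumptions used for illustration (check the current Lambda pricing page for your Region), request charges are left out, and the sketch assumes the function runs for the same duration on both architectures, which may not hold for every workload.

```python
def lambda_compute_cost(invocations, avg_duration_ms, memory_mb,
                        price_per_gb_second):
    """Estimate monthly Lambda compute charges, excluding request charges."""
    gb_seconds = invocations * (avg_duration_ms / 1000) * (memory_mb / 1024)
    return gb_seconds * price_per_gb_second


X86_PRICE = 0.0000166667  # assumed USD per GB-second on x86
ARM_PRICE = 0.0000133334  # assumed USD per GB-second on Arm (Graviton2)

# Hypothetical workload: 10 million invocations, 200 ms each, 512 MB memory.
x86_cost = lambda_compute_cost(10_000_000, 200, 512, X86_PRICE)
arm_cost = lambda_compute_cost(10_000_000, 200, 512, ARM_PRICE)
print(round(x86_cost, 2), round(arm_cost, 2))
print(round(1 - arm_cost / x86_cost, 2))  # fraction saved on compute
```

If the function also runs faster on Graviton2, the saving grows; if it runs slower, part of the price advantage is lost, so measure duration on both architectures before migrating.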

Leveraging Graviton2 for Amazon RDS and Amazon Aurora Databases

Amazon Relational Database Service (Amazon RDS) and Amazon Aurora support a multitude of instance types to scale database workloads based on needs. Both services now support Arm-based AWS Graviton2 instances, which provide up to 52% price/performance improvement for Amazon RDS open-source databases, and up to 35% price/performance improvement for Amazon Aurora, depending on database size. By moving to Graviton2 for your Amazon RDS and Amazon Aurora Databases, you can optimize your architecture and reduce AWS costs.

Establishing a FinOps Culture in Your Entire Organization

Establishing a FinOps culture and making use of Service Control Policies (SCPs) can help control ongoing costs and guide deployment decisions, such as instance-type selection. Tools like AWS Cost Explorer can identify opportunities for optimizations, including data transfer, storage in Amazon Simple Storage Service and Amazon Elastic Block Store, idle resources, and the use of Graviton2.
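As an example of guiding instance-type selection, the sketch below generates an SCP document that denies launching EC2 instances whose type is not on an approved list. The statement ID and the approved types are hypothetical; the policy would be attached through AWS Organizations.

```python
import json


def instance_type_guardrail(allowed_types):
    """Build an SCP that denies ec2:RunInstances for unapproved instance types."""
    return {
        "Version": "2012-10-17",
        "Statement": [{
            "Sid": "DenyUnapprovedInstanceTypes",  # hypothetical statement ID
            "Effect": "Deny",
            "Action": "ec2:RunInstances",
            "Resource": "arn:aws:ec2:*:*:instance/*",
            "Condition": {
                "StringNotEquals": {"ec2:InstanceType": sorted(allowed_types)}
            },
        }],
    }


# Hypothetical allow-list of Graviton-based instance types.
policy = instance_type_guardrail(["t4g.small", "m7g.large"])
print(json.dumps(policy, indent=2))
```

Because SCPs apply to every principal in the affected accounts, it is worth rolling out a guardrail like this to a test organizational unit before applying it broadly.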

AWS Cost Management Tools

AWS provides a number of useful cost management tools, many of them available at no additional charge. It is a good idea to familiarize yourself with them, as they can provide valuable insight into your cloud infrastructure. Here are the most useful AWS tools for cost reduction:

AWS Billing and Cost Management Console

Think of this as the starting point for understanding your AWS bill. It centralizes invoices, payment history, tax settings, and overall account-level spending in one place. Its dashboard widgets help track billing trends over time and monitor Savings Plans and Reserved Instance utilization, making it easier for finance and cloud leaders to quickly spot unusual increases or underused commitments.

AWS Cost Explorer

When you need to understand exactly where the money is going, Cost Explorer is often the first place to investigate. It visualizes historical usage and spending trends, lets you group costs by service, region, account, usage type, or tags, and helps forecast future spending. Features like hourly granularity, resource-level insights, and cost comparison reports make it valuable for identifying cost drivers. With Amazon Q integration, teams can now ask natural-language questions about spend and receive AI-assisted analysis.
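The same grouping is available programmatically through the Cost Explorer API. The sketch below defines a request that groups monthly cost by service and a helper that ranks services by spend from the response. The dates are placeholders and the helper name is our own; the response shape follows the `get_cost_and_usage` call of the boto3 Cost Explorer client.

```python
from collections import defaultdict

# Request parameters for get_cost_and_usage; dates are placeholders.
REQUEST = {
    "TimePeriod": {"Start": "2024-01-01", "End": "2024-02-01"},
    "Granularity": "MONTHLY",
    "Metrics": ["UnblendedCost"],
    "GroupBy": [{"Type": "DIMENSION", "Key": "SERVICE"}],
}


def cost_by_service(response):
    """Return (service, total_cost) pairs sorted from highest to lowest cost."""
    totals = defaultdict(float)
    for period in response["ResultsByTime"]:
        for group in period["Groups"]:
            service = group["Keys"][0]
            amount = group["Metrics"]["UnblendedCost"]["Amount"]
            totals[service] += float(amount)  # amounts arrive as strings
    return sorted(totals.items(), key=lambda item: item[1], reverse=True)


# With credentials configured:
# import boto3
# response = boto3.client("ce").get_cost_and_usage(**REQUEST)
# for service, cost in cost_by_service(response)[:5]:
#     print(f"{service}: ${cost:.2f}")
```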

AWS Budgets

Rather than simply monitoring spend, AWS Budgets helps enforce financial limits. Teams can define thresholds for overall cost, usage, Reserved Instance coverage, or Savings Plans utilization, and trigger alerts before budgets are exceeded. It can also automate responses, such as stopping resources or applying policy restrictions, which makes it useful for organizations with strict governance requirements.
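A budget with an alert can also be created through the API. The sketch below builds the two arguments for the `create_budget` call of a boto3 Budgets client: a monthly cost budget and an email notification that fires when actual spend passes a percentage of the limit. The budget name, limit, threshold, email address, and account ID are hypothetical.

```python
def monthly_cost_budget(name, limit_usd, alert_percent, email):
    """Build (Budget, NotificationsWithSubscribers) for budgets.create_budget."""
    budget = {
        "BudgetName": name,
        "BudgetLimit": {"Amount": str(limit_usd), "Unit": "USD"},
        "TimeUnit": "MONTHLY",
        "BudgetType": "COST",
    }
    notifications = [{
        "Notification": {
            "NotificationType": "ACTUAL",           # alert on actual, not forecast, spend
            "ComparisonOperator": "GREATER_THAN",
            "Threshold": float(alert_percent),
            "ThresholdType": "PERCENTAGE",
        },
        "Subscribers": [{"SubscriptionType": "EMAIL", "Address": email}],
    }]
    return budget, notifications


budget, notifications = monthly_cost_budget(
    "team-monthly", 1000, 80, "finops@example.com")

# With credentials configured (account ID is a placeholder):
# import boto3
# boto3.client("budgets").create_budget(
#     AccountId="123456789012",
#     Budget=budget,
#     NotificationsWithSubscribers=notifications,
# )
```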

AWS Trusted Advisor

If your goal is to quickly identify obvious waste, Trusted Advisor is one of the easiest tools to use. It continuously scans your environment and highlights inefficiencies such as idle load balancers, unattached Elastic IP addresses, low-use EBS volumes, and underutilized EC2 instances. It often surfaces quick cost-saving wins without requiring deep manual analysis.

Amazon CloudWatch

CloudWatch helps connect infrastructure usage to cost decisions. By tracking CPU, network traffic, memory (which for EC2 instances requires the CloudWatch agent), and custom application metrics in near real time, it can reveal overprovisioned resources or unexpected spikes in demand. Alarms and automated triggers allow teams to scale resources, shut down idle systems, or launch remediation workflows automatically.

AWS Cost and Usage Report

For teams that need full billing transparency, this is the most detailed dataset AWS provides. It breaks down costs by hour, day, resource, service, account, and custom tags, then delivers the raw data to Amazon S3. From there, it can be queried with Athena, visualized in QuickSight, or connected to third-party FinOps platforms for deeper reporting and analysis.

AWS Cost Anomaly Detection

Unexpected spikes can be expensive if no one notices them early. This service uses machine learning to establish normal spending patterns and alerts teams when unusual cost or usage behavior appears. It is particularly useful for catching runaway workloads, deployment mistakes, or sudden changes before month-end bills arrive.

AWS Compute Optimizer

Overprovisioning is one of the most common sources of waste in AWS environments. Compute Optimizer analyzes historical utilization and recommends better-sized EC2 instances, EBS volumes, Lambda memory allocations, ECS tasks on Fargate, and selected database workloads. It is especially valuable for engineering teams balancing performance and cost.

AWS Cost Optimization Hub

For organizations managing multiple accounts or large environments, this service consolidates recommendations from across AWS into one place. It aggregates insights from Compute Optimizer, Trusted Advisor, and commitment usage analysis, then prioritizes opportunities based on estimated savings. Recent additions such as Cost Efficiency metrics make it easier to track optimization progress over time.

How Romexsoft Helps with AWS Cost Optimization

At Romexsoft, we help businesses reduce AWS spend without compromising performance, security, or scalability. Our AWS cost optimization services combine engineering expertise with financial visibility to uncover savings opportunities and improve long-term cost control.

Our AWS-certified experts perform a detailed cost assessment to identify major cost drivers, inefficient resource usage, and missed optimization opportunities. Based on the findings, we deliver a prioritized action plan with measurable savings estimates and practical recommendations backed by engineering analysis.

We cover the full range of cost optimization services:

- Cost assessment starts with a full billing and account review that surfaces waste, anomalies, and the main cost drivers behind your AWS spend. The output is a clear picture of current costs and a prioritized list of actions to reduce them.

- Compute rightsizing targets utilization across EC2, RDS, EKS, ECS, and ElastiCache, adjusting instance types, enabling Graviton migration, and applying Spot configuration and scheduled scaling for non-production environments.

- Storage cost optimization reviews your full Amazon S3, Amazon EBS, and snapshot footprint, restructures retention and tiering policies based on actual access patterns, and automates lifecycle transitions to maintain long-term savings.

- Unused resource cleanup identifies idle compute, detached storage, orphaned networking resources, and abandoned environments, then removes them. Tagging enforcement and governance guardrails are applied to prevent the same waste from rebuilding.

- Cost visibility and monitoring adds automated anomaly detection, cost allocation dashboards, monthly reviews, and scheduling policies so unusual spending is caught early and optimization opportunities stay visible.

Romexsoft helps keep cloud spending efficient and under control whether you need a simple assessment or comprehensive long-term FinOps services. Financial operations goes beyond one-time cost-cutting by helping organizations proactively measure usage, forecast spend, optimize resources, and align cloud investment with business priorities.

Our Cloud Financial Management services establish processes for cost allocation, budgeting, anomaly detection, and continuous optimization, giving teams better visibility into cloud costs and stronger control as AWS environments evolve.

Frequently Asked Questions

How do I know if we are overspending on AWS?

You may be overspending on AWS if your cloud costs keep rising without a clear link to business growth, traffic, or product usage. Common signs include underutilized or idle resources, oversized instances, paying On-Demand rates for steady workloads, and poor cost visibility due to missing tags or unclear allocation. Unexpected billing spikes or higher costs during non-peak periods can also signal inefficiencies. A structured AWS cost assessment can help uncover waste, identify key cost drivers, and define practical ways to reduce spend.

What are some common mistakes that lead to higher AWS costs?

Common mistakes include leaving unused instances and other idle resources running, over-provisioning compute, storage, or database capacity, and not taking full advantage of AWS pricing models such as Reserved Instances and Spot Instances. Each of these adds charges that deliver no business value, and together they can account for a significant share of the monthly bill.

How to automate the process of AWS cost optimization?

We recommend automating cost optimization by combining monitoring, intelligent recommendations, and automated actions. Use tools like AWS Cost Explorer, AWS Compute Optimizer, and AWS Cost Anomaly Detection to continuously identify waste, oversized resources, and unusual spending patterns. Then use Amazon CloudWatch, AWS Lambda, and Amazon EventBridge to automatically trigger actions such as shutting down idle instances, resizing resources, or notifying teams.

You can also automate scaling with Amazon EC2 Auto Scaling and schedule non-production environments to stop outside working hours. For long-term control, implement FinOps processes with tagging, budgets, and regular reviews to keep costs optimized as your environment grows.

How does right-sizing contribute to cost optimization?

Right-sizing helps optimize AWS costs by matching resource types and sizes to actual workload requirements instead of paying for excess capacity. Businesses often overprovision compute, storage, or database resources “just in case,” which leads to unnecessary spend. By analyzing real usage metrics such as CPU, memory, network, and storage performance, you can downsize underutilized resources, upgrade overloaded ones, or switch to more cost-effective instance families.